InputSplit vs Blocks in Hadoop MapReduce: In this MapReduce tutorial, we compare the MapReduce InputSplit with the HDFS block. First, we will see what an HDFS data block is and what a Hadoop InputSplit is. Then we will discuss the feature-wise differences between InputSplit and blocks. Finally, we will work through an example of a Hadoop InputSplit and data blocks in HDFS.

Introduction to InputSplit and Blocks in Hadoop MapReduce

Let us first look at what HDFS data blocks and Hadoop InputSplits are, one by one.

What is a Block in HDFS?

HDFS divides large files into fixed-size chunks known as blocks. A block is the minimum amount of data that HDFS can read or write, and HDFS stores each file as a sequence of blocks. The blocks of a file are distributed across multiple nodes in the cluster. The HDFS client has no control over block details such as block location; the NameNode decides all such things.

What is InputSplit in Hadoop?

An InputSplit represents the data that an individual mapper processes. Therefore, the number of map tasks is equal to the number of InputSplits. The framework divides each split into records, which the mapper processes one at a time.

At first, input files store the data for a MapReduce job. These input files typically reside in HDFS. InputFormat describes how to split up and read the input files, and it is responsible for creating the InputSplits.

Comparison Between InputSplit and Blocks in Hadoop MapReduce

Let us now study the feature-wise differences between InputSplit and blocks in the Hadoop framework.

Data Representation

• Block – An HDFS block is the physical representation of data in Hadoop.

• InputSplit – A MapReduce InputSplit is the logical representation of the data stored in blocks. It is used during data processing in a MapReduce program or other processing techniques. The important point is that an InputSplit doesn’t contain actual data; it is just a reference to the data.

Size

• Block – By default, the HDFS block size is 128 MB, which you can change as per your requirement. All blocks of a file are the same size except the last block, which can be either the same size or smaller. HDFS splits files into 128 MB blocks and then stores them in the Hadoop file system.

• InputSplit – By default, the InputSplit size is approximately equal to the block size. It is user-configurable: in a MapReduce program, the user can control the split size based on the size of the data.
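To make the relationship between block size and split size concrete, here is a minimal Python sketch. It assumes the rule that, to our understanding, Hadoop's FileInputFormat applies: the split size is the block size clamped between a configurable minimum and maximum, and the last split is allowed to be slightly larger than the others. The function names and the 10% tolerance constant are our own illustration, not a Hadoop API.

```python
MB = 1024 * 1024

# Assumption: the last split may be up to 10% larger than the split size,
# so a tiny leftover does not get a map task of its own.
SPLIT_SLOP = 1.1


def compute_split_size(block_size, min_size=1, max_size=2**63 - 1):
    """Clamp the block size between the minimum and maximum split size."""
    return max(min_size, min(max_size, block_size))


def compute_splits(file_size, block_size=128 * MB, min_size=1, max_size=2**63 - 1):
    """Return (offset, length) pairs describing the logical splits of a file."""
    split_size = compute_split_size(block_size, min_size, max_size)
    splits = []
    remaining = file_size
    # Cut full-size splits while clearly more than one split's worth remains.
    while remaining / split_size > SPLIT_SLOP:
        splits.append((file_size - remaining, split_size))
        remaining -= split_size
    # Whatever is left becomes the final split.
    if remaining > 0:
        splits.append((file_size - remaining, remaining))
    return splits


# With default settings, the split size follows the block size.
for offset, length in compute_splits(300 * MB):
    print(f"split at {offset // MB} MB, length {length // MB} MB")

# Lowering the maximum split size yields more, smaller splits (more map tasks).
print(len(compute_splits(300 * MB, max_size=64 * MB)))
```

Because each split becomes one map task, changing the minimum or maximum split size is the usual way to trade off the number of mappers against the amount of data each one handles.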

Example of Block and InputSplit in Hadoop

Suppose we need to store a file in HDFS. HDFS stores files as blocks, and a block is the smallest unit of data that HDFS stores or retrieves. The default block size is 128 MB. HDFS breaks the file into blocks and then stores these blocks on different nodes in the cluster.

For example, suppose we have a file of 132 MB. HDFS will break this file into 2 blocks: one of 128 MB and one of 4 MB.

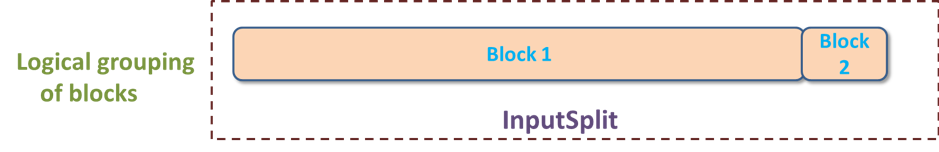
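The block arithmetic for this example can be sketched in a few lines of Python. This is only an illustration of how a file size maps to block offsets and lengths; `split_into_blocks` is a hypothetical helper, not part of HDFS.

```python
MB = 1024 * 1024


def split_into_blocks(file_size, block_size=128 * MB):
    """Return (offset, length) pairs for the physical blocks of a file."""
    blocks = []
    offset = 0
    while offset < file_size:
        # Every block is full-size except possibly the last one.
        length = min(block_size, file_size - offset)
        blocks.append((offset, length))
        offset += length
    return blocks


for index, (offset, length) in enumerate(split_into_blocks(132 * MB)):
    print(f"block {index}: offset={offset // MB} MB, length={length // MB} MB")
# block 0: offset=0 MB, length=128 MB
# block 1: offset=128 MB, length=4 MB
```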

Now, if MapReduce processed each block in isolation, the result could be wrong. The reason is that blocks are cut at fixed byte offsets, so a record that begins near the end of the first block may continue into the second block, and neither block on its own holds the complete record. InputSplit solves this problem. A MapReduce InputSplit forms a logical grouping over the blocks: it includes the location of the next block and the byte offset of the data needed to complete the record.
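The following Python sketch illustrates the idea on a small scale. It assumes newline-terminated text records and mimics, in simplified form, the convention a line-oriented record reader can use: every split except the first skips its partial first line, and every split reads past its end to finish the last record it started. The function `read_split_records` and the sample data are hypothetical.

```python
def read_split_records(data, start, length):
    """Return the newline-terminated records that belong to one split."""
    end = start + length
    pos = start
    if start != 0:
        # Skip the first (possibly partial) line: the previous split reads it.
        newline = data.find(b"\n", start)
        if newline == -1:
            return []
        pos = newline + 1
    records = []
    # Read every line that starts at or before the split end, even if the
    # line itself runs past the end into the next block.
    while pos <= end and pos < len(data):
        newline = data.find(b"\n", pos)
        stop = len(data) if newline == -1 else newline
        records.append(data[pos:stop].decode())
        pos = stop + 1
    return records


data = b"alpha\nbravo\ncharlie\ndelta\n"
# Cut the data at byte 10, which falls in the middle of "bravo".
first = read_split_records(data, 0, 10)
second = read_split_records(data, 10, len(data) - 10)
print(first)   # ['alpha', 'bravo']
print(second)  # ['charlie', 'delta']
```

Even though the cut falls inside a record, each record is processed exactly once, which is the guarantee the logical split provides on top of the physical blocks.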

Conclusion

Therefore, an InputSplit is only a logical chunk of data, i.e. it holds just the information about block addresses or locations, while a block is the physical representation of data. After reading this tutorial, you should have a clear understanding of the difference between InputSplit and HDFS data blocks.